Bio

I am a PhD student at the University of Chicago, fortunately advised by prof Ce Zhang. I finished my master study in CS at ETH Zurich. Before the master’s, I spent a year working on building search engines at ByteDance as a MLE. I graduated from NYU with honors in CS and was awarded with Prize for Outstanding Performance in CS.

I’ve spent time at Meta AI GenAI as an AI resident, working on code, long context LLMs, and 3D computer vision. I also interned as research scientist at Nvidia working on diffusion language models and hybrid architecture. I’ll join ByteDance Seed as a research scientist exploring LLM pre-training and long context modeling.

{first_name}6 AT uchicago DOT edu

Feel free to drop me an email for anything, especially for potential collaboration!!

- Large language models & NLP

- AI systems

- Science of foundation models

PhD Student in CS, 2024 - Present

University of Chicago

MS in Computer Science, 2024

ETH Zurich

BA in Computer Science with Honors, 2020

New York University

Updates

[2026.06] I’ll be joining ByteDance Seed as a research scientist intern this summer.

[2026.05] New paper on tri-mode diffusion models got released! Check out the tech report and HF space.

[2026.05] Our paper TiDAR got accepted to MLsys as Oral presentation!

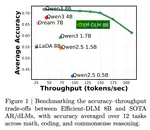

[2026.04] Three papers got accepted to ICML 2026! Checkout PPD, Efficient-DLM, and Scaling Diffusion LLMs.

[2026.03] Checkout our new work - PPD disaggregation, which adaptively routes prefill operation after the first-turn to prefill or prefill-capable decode nodes during LLM inference (simple but effective in satisfying various SLOs).

[2026.02] New paper Scaling Beyond Masked Diffusion Language Models out! Scaling laws on different families of diffusion LMs: perlexity != downstream performance.

[2026.01] ASAP Seminar Talk and Discrete Diffusion Reading Group Talk on TiDAR as well as demo webiste are out.

[2025.11] TiDAR at Nvidia is out! As a sequence-level hybrid model that conducts parallel diffusion drafting and autoregressive sampling in a single forward, TiDAR is the first architecture to close the quality gap with AR models while delivering 4.71x to 5.91x more tokens per second. Stay tuned for the SGLang inference code release.

[2025.05] We introduce HAMburger, a new model that redefines resource allocation for LLMs by generating multiple tokens per step with a single KV cache.

[2025.05] Speculative Prefill got accepted by ICML 2025! Feel free to try our code here.

[2025.03] I will join the Inference Optimization team at Nvidia as a research scientist intern in summer 2025.

Show More

[2025.02] New work released called Speculative Prefill, which increases LLM inference TTFT and maximal QPS! Feel free to check the paper and code.

[2024.10] Our survey paper got accepted by TMLR 2025!

[2024.09] I’m starting my PhD at Uchicago, working with professor Ce Zhang.

[2024.08] Our paper got accepted by WACV 2025!

Experience

Research on Diffusion LLMs:

- Scaling different types of DLLMs

- Model distillation

- Developing adaptive caching mechanism

- Hybrid architecture

Research on large language models:

- CodeLlama: SOTA open sourced code generation LLMs

- Llama 2 Long: effective context length extension of Llama 2 up to 32K

Research on 3D computer vision:

- Semantic 3D indoor scene synthesis, reasoning, and planning

- Text-guided 3D human generation

Papers

Academic Service

Reviewer for NeurIPS 2026

Reviewer for ICLR 2026

Reviewer for ICML 2025, 2026 (Gold Reviewer)

Reviewer for How Far Are We From AGI @ ICLR 2024

Reviewer for Long-Context Foundation Models (LCFM) @ ICML 2024

Miscellaneous

I was first trained as a game designer at NYU Game Center during my undergrad and became increasingly more interested in CS and AI. Despite that, I’m still very interested in game dev, physically-based rendering, and game AI.

During my free time, I enjoy playing chess (my favorite live-stream), electric guitars (my favorite instrumental band), and recently got obsessed with golf (a group of chilled golfers).